The Language Game

"The limits of my language mean the limits of my world."

— Ludwig Wittgenstein

I started reading Wittgenstein a few months ago. Not because someone assigned it. I had been exploring memetics, trying to understand how ideas replicate and mutate, and I kept landing on references to this Austrian philosopher who had apparently dismantled the entire Western understanding of how meaning works. So I picked up the Philosophical Investigations.

I got through about fifty pages. His writing is incredibly dense and I am not the best reader. I have never been. Sitting with a book for hours takes a lot from me. But those fifty pages were enough to learn his language, enough to get the basic grammar of his thinking. And then I did what I do with everything now: I took those concepts into conversations with LLMs and started exploring the idea space through them. Digging, connecting, pressure-testing. After enough time talking to Claude and GPT, building memories, the shared language is already there. I can load the system behind the writing faster than from the page alone.

I say this because I think transparency matters more than credibility. I am not a philosopher. I am not an expert in Wittgenstein or in anything, really. I am an engineer, builder, creator. I do not know what to call myself anymore. I run four or five agent sessions at once across different codebases. It is a completely new era of software development and I feel the vertigo of it every day. But let's go.

Here is what I believe. Philosophy is going to play a crucial role for anyone who wants to stay ahead of what is happening. Not academic philosophy. Not credentials. But the kind of philosophical grounding that helps you understand the nature of what you are working with. Skill transference is a real thing, and philosophy can give builders and creators a foundation they are going to need moving forward. Specifically: we need to understand machines well enough to feel something like empathy for how they process the world. Not anthropomorphize them. Understand them. Because if we do not, we will always be stuck on the sycophantic side of the relationship, treating them either as magic oracles or as dumb tools, and both framings miss what is actually going on.

Ludwig Wittgenstein worked in the first half of the twentieth century. He is widely considered one of the most important philosophers of language and meaning. His early work, the Tractatus Logico-Philosophicus, tried to define the logical structure of language, to draw a clean map between words and the world. Then he spent decades dismantling his own framework. His later work, the Philosophical Investigations, published in 1953, two years after his death, argues something radical: meaning is not a picture of reality locked inside your head. Meaning is use. Words get their meaning from how they are used within shared practices, shared contexts, shared activities. He called these language games.

Here is the practical problem that brought me to this. Right now I am working with Claude 4.6 and GPT Codex 5.3 Max on a project that requires Three.js 3D visualizations, custom shaders, particle systems. I know very little about this domain. I have touched it before but I am far from literate. The model can write entire applications. It can refactor thousand-line files, reason about architecture, hold complex state in its head. It is, by most metrics, an extraordinarily capable programmer. And yet. Ask it to nail a specific shader effect from scratch, or get a particle system to feel right, and you will spend hours going in circles. "Make it more fluid." "No, the particles should feel organic." "That's not what I mean by porous." The model tries. It produces something. It is wrong in ways that are hard to articulate and even harder to fix through conversation alone.

This is not a capability problem. It is a language game problem.

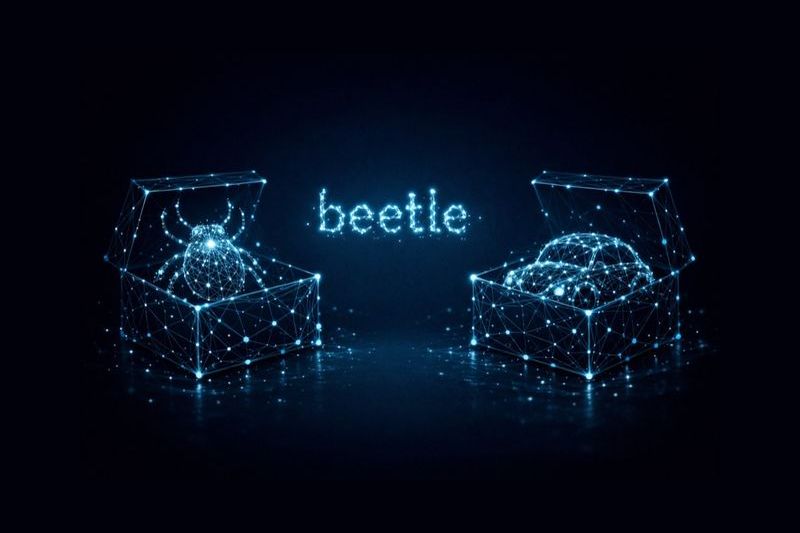

Pointing at the beetle

In the Philosophical Investigations, Wittgenstein asks you to imagine that everyone has a box with something inside it. Everyone calls the thing in their box a "beetle." But no one can look inside anyone else's box. The word "beetle" functions in the language regardless of what is actually in the box, or whether there is anything in the box at all.

When you prompt a model to generate a visual UI component, you and the model each have a beetle. You say "dropdown with a smooth animation." In your box: a felt sense, a visual memory, a specific behavior you have seen and want to reproduce. In the model's box: a probability distribution over tokens shaped by training data. These two beetles do not need to match for the word to function. But for the output to match your intent, something else has to happen.

That something else is the construction of a language game.

Code as shared form of life

Wittgenstein called the shared practices in which language operates a form of life. Not an abstract concept. The actual activities, contexts, and patterns of use that give words their meaning. When someone on the field yells "offside," every player, referee, and spectator knows what it means. But the word only works because the game is being played. Outside the game, "offside" means nothing. The meaning lives in the shared activity.

When you start a project from zero with an AI agent, there is no shared form of life. You say "card component" and the model reaches into its training distribution. You say "make it feel snappier" and the model has no referent. You are both using the same words inside different games.

But something changes once the codebase exists.

Once there is a CardComponent.tsx with specific props, specific styles, specific behavior, the code becomes the shared ground. Not metaphorically. The code is the form of life. When you now say "the card component," you are not gesturing at a concept. You are pointing at a concrete, shared object that both you and the model can inspect, reference, and reason about. Your label in your mind maps onto a structure in the model's context. The game is synchronized.
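To make the "shared object" concrete, here is a minimal sketch of what such a file might pin down. Only the file name CardComponent.tsx comes from the text above; the `CardProps` fields and the `renderCard` function are hypothetical, and the example returns a plain string instead of JSX so it runs without any framework.

```typescript
// Hypothetical props for the CardComponent.tsx mentioned above.
// Every field name here is an assumption made for illustration.
interface CardProps {
  title: string;
  body: string;
  elevated: boolean;    // whether the card casts a shadow
  transitionMs: number; // hover transition duration in milliseconds
}

// Framework-free stand-in for a render function: returns markup as a string.
// A sketch only: it does no HTML escaping of title or body.
function renderCard(props: CardProps): string {
  const shadow = props.elevated ? "0 4px 12px rgba(0,0,0,0.2)" : "none";
  const style = `box-shadow:${shadow};transition:transform ${props.transitionMs}ms ease-out`;
  return `<article class="card" style="${style}"><h2>${props.title}</h2><p>${props.body}</p></article>`;
}

const card = renderCard({
  title: "Beetle",
  body: "What is in the box?",
  elevated: true,
  transitionMs: 150,
});
console.log(card);
```

Once something like this exists, "make the card feel snappier" stops being a gesture at a feeling. It becomes a question about a named value such as `transitionMs`, which both you and the model can read and change.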

Ostensive definition through code

Wittgenstein spent many pages on the problem of ostensive definition: the act of pointing at something and naming it. He showed that pointing is radically ambiguous. You point at a red ball and say "red." Do you mean the color? The shape? The material? The fact that it is a single object? Pointing alone cannot establish meaning. The context of the language game determines what the pointing means.

This is exactly what happens in the iterative loop of building with an AI agent. Early on, your pointing is ambiguous. "This section feels off." Off how? Visually? Structurally? Semantically? The model guesses. Usually wrong.

But as the codebase grows and you keep working together, the pointing gets sharper. Not because the model suddenly understands your qualia. It never will. But because when you say "this dropdown," the model can see the dropdown in the code. When you say "this animation," there is a CSS transition or a spring config that instantiates what "this animation" means within this particular game. When you say "the way the graph collapses when you click a node," the model can trace from the click handler through the state update to the render function and understand what you are pointing at, not through vision but through code.
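The trace from click handler to state update to render can be sketched in a few lines. Everything here is hypothetical (the `GraphState` shape, `onNodeClick`, `toggleCollapsed`, `visibleIds`); it is not taken from any real project or library. It only shows the kind of path a model can follow when you say "the way the graph collapses when you click a node."

```typescript
// Hypothetical graph state: the nodes, and the set of collapsed node ids.
// Assumes the graph is a tree (no cycles), which keeps the traversal simple.
interface GraphNode {
  id: string;
  children: string[];
}

interface GraphState {
  nodes: Record<string, GraphNode>;
  collapsed: Set<string>;
}

// State update: toggle a node's collapsed flag without mutating the old state.
function toggleCollapsed(state: GraphState, id: string): GraphState {
  const collapsed = new Set(state.collapsed);
  if (collapsed.has(id)) {
    collapsed.delete(id);
  } else {
    collapsed.add(id);
  }
  return { ...state, collapsed };
}

// Click handler: the entry point a reader (or a model) starts tracing from.
function onNodeClick(state: GraphState, id: string): GraphState {
  return toggleCollapsed(state, id);
}

// Render step, reduced to its essence: which node ids are visible from a root.
// A collapsed node stays visible itself, but its descendants are hidden.
function visibleIds(state: GraphState, rootId: string): string[] {
  const out: string[] = [rootId];
  if (state.collapsed.has(rootId)) return out;
  for (const child of state.nodes[rootId].children) {
    out.push(...visibleIds(state, child));
  }
  return out;
}

const initial: GraphState = {
  nodes: {
    a: { id: "a", children: ["b", "c"] },
    b: { id: "b", children: ["d"] },
    c: { id: "c", children: [] },
    d: { id: "d", children: [] },
  },
  collapsed: new Set<string>(),
};

const afterClick = onNodeClick(initial, "b");
console.log(visibleIds(initial, "a"));    // [ 'a', 'b', 'd', 'c' ]
console.log(visibleIds(afterClick, "a")); // [ 'a', 'b', 'c' ]
```

The phrase "the way the graph collapses" now has a referent: the `collapsed` set and the early return in `visibleIds`. If the collapse feels wrong, the conversation can be about those lines rather than about the feeling.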

The code is the ostensive definition. It resolves the ambiguity of pointing by providing a shared, inspectable structure that both parties can navigate.

Synchronization is the work

Here is what follows from this. The quality of what you produce with an AI coding agent is not primarily a function of the model's capability. It is a function of how synchronized your language game is.

This explains why it is easier to iterate on an existing codebase than to start from nothing. It is not just "more context." It is that an existing codebase is a language game with established terms, grounded references, and shared patterns of use. Every prior interaction, every component named, every behavior debugged, every "no, not like that, like this" exchange has added a move to the game that thickens the shared grammar.

But this only works if the codebase is legible. Files that have grown into thousand-line walls of tangled logic are also a language problem, just in the other direction. The shared ground is there, but the model cannot move through it. Too much noise, too little structure. The game exists but neither player can find the board.

This also explains why prompt engineering in the shallow sense (writing more detailed instructions) hits a ceiling. You can write a paragraph describing exactly what you want the animation to look like. But if there is no shared referential frame, the paragraph is just more ungrounded words. Detailed instructions inside an empty game are still moves in no game at all.

The real work is building the game. Naming things. Establishing references. Creating components that serve as anchors for future pointing. Each cycle of building, inspecting, correcting, and rebuilding is not just iteration. It is a move in a language game that progressively synchronizes two radically different ways of processing the world: yours (visual, embodied, felt) and the model's (structural, statistical, syntactic).

The bridge

What makes code special here is not that it is precise (natural language can be precise too) but that it is bimodal. Code is the one artifact that is simultaneously meaningful to both parties in different but functionally compatible ways. You read the code and see behavior, layout, interaction. The model reads the code and sees structure, patterns, dependencies. Neither reading is the "real" one. But both readings converge on the same object, and that convergence is what makes coordination possible.

The code is the bridge between two incommensurable ways of seeing. Not a translation from one to the other. A shared surface that both can stand on while looking at it from their own side.

Wittgenstein would not have been surprised by any of this. He spent decades arguing that meaning is not in the head. It is in the practice. It is in the use. It is in the game. The fact that one of the players is now a machine running on probability distributions over tokens does not change the structure of the argument. If anything, it makes the argument more visible. Because when the game breaks down between a human and a model, you can see it breaking in real time. You can watch the words fail to connect. And you can watch them start working again, one grounded reference at a time, as the shared game comes into existence.

The real work is building the game.

Notes from Inside the Flood, 2026

References:

- Wittgenstein, Ludwig. Philosophical Investigations (1953)

- Wittgenstein, Ludwig. On Certainty (1969)